Local Memo: Google Releases Gemini AI Model

In this week’s update, learn about Google’s new Gemini AI model; Meta’s updated AI features; the launch of Deep Search from Microsoft; hours of operation as a local ranking factor; suggestions for creating Google Business Profile photos; and the popularity of AI with digital agencies.

Google Releases Gemini AI Model

The team at Google DeepMind has announced the release of Gemini, an advanced AI model that the company claims is more powerful than OpenAI’s GPT-4. Gemini is designed to be multimodal and can work with “text, code, audio, image and video,” according to the announcement.

As of its December 6 launch, Gemini is live as the new backbone of Google’s Bard chatbot and powers features in the new Pixel 8 Pro smartphone, such as Summarize in the voice recorder app. Gemini is also now improving the speed and quality of Google’s Search Generative Experience (SGE). Google says that Gemini will be added “in the coming months” to an array of other products, including Search, Ads, and Chrome.

Greg Sterling speculates that Gemini’s natively multimodal approach and its ability to create custom interfaces based on the needs of each conversation may augur a future where Google moves beyond the traditional search interface.

Meta Announces Expanded AI Features

On December 6, the same day as Google’s Gemini launch, Meta announced a new set of AI features. Meta AI launched earlier this fall in Messenger, Instagram, and WhatsApp as a chatbot assistant with a range of celebrity lookalike avatars. It will now also be integrated with a variety of Meta features, for instance helping to phrase or edit a post or suggesting topics to discuss in a group.

The image generation feature called “imagine” will allow users to “reimagine” images created by others in an interactive manner. The “imagine” feature is now live outside the chatbot experience as well, at imagine.meta.com. Meta AI will also add Reels content to chats, for instance recommending things to do in Tokyo with relevant videos.

Reels content in Meta AI chat, courtesy Meta

Microsoft Launches Deep Search

Microsoft is introducing a new tool called Deep Search that uses generative AI to help users with complex queries. Rather than relying solely on search rankings for the terms entered by the user, Deep Search looks at a wider set of results based on the possible intent of the searcher.

For example, a search for “how do points systems work in Japan” might give the user options to drill down on several possible subtopics, such as loyalty programs, traffic violations, and immigration policy. Once the user chooses the intended subtopic, Deep Search will suggest results based on various inferred interests, such as “best loyalty cards for travelers in Japan” or “redeeming loyalty cards in Japan.” Deep Search will appear for randomly selected Bing users while it is in a testing phase and is not intended to appear in every search.

Demo of Deep Search, courtesy Microsoft

Hours as a Local Ranking Factor

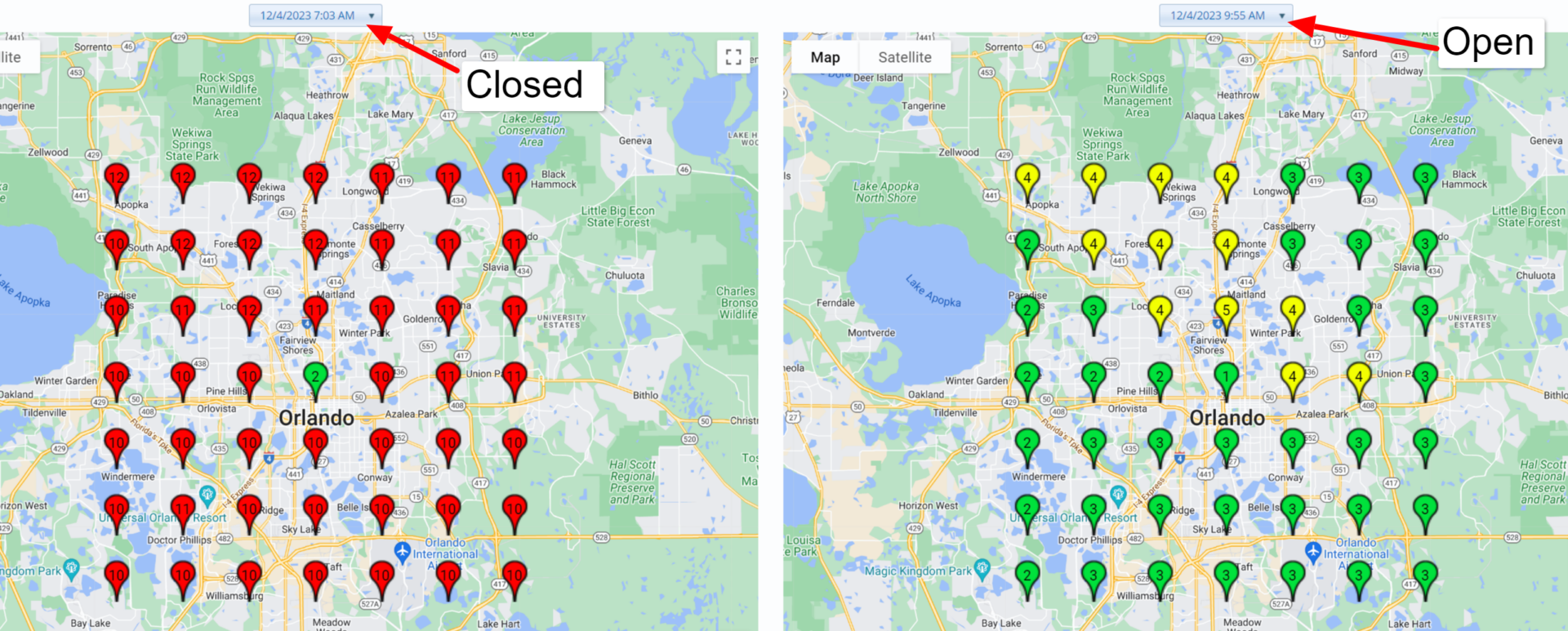

In a blog post and a recent webinar, Joy Hawkins states that after Google’s November core algorithm update, hours of operation began to function as a local ranking factor. As a result, rankings now fluctuate for many businesses, which appear in search when they are open but not when they are closed.

Greg Sterling points out that others are not seeing this effect, while some users on X have claimed that hours are not new as a ranking factor and have been functioning as one for some months. In fact, our own Michael Snow has observed the same thing; perhaps, if anything, the November update made this factor more widespread.

Courtesy Sterling Sky

Options for Google Business Profile Photos

Continuing her trend of original and helpful posts, Miriam Ellis shares tips and examples for adding photo content to Google Business Profiles. In addition to the more standard exterior and interior photos of a business, Ellis recommends adding photos that showcase amenities such as handicapped accessibility or pet friendliness; photos that showcase a business’s longevity or mission statement; photos that display stocked shelves as well as individual products; and photos showing staff interacting with happy customers, along with many other recommendations that are worth checking out.

How Many Digital Agencies Use AI? All of Them

A new survey of more than 200 digital agency leaders conducted by Duda is notable in finding that 100% of the agencies surveyed are using AI and seeing efficiency improvements as a result. Despite this, 84% of agencies are concerned about keeping up with the pace of AI development in 2024. Agencies are mostly focused on using AI for content planning, creation, and updating.

Damian Rollison
